Hackers are using AI. Here's what you need to know.

Since the release of AI coding agents in late 2025, reverse engineering has shifted. Not incrementally. Fundamentally.

As a cybersecurity company on the front lines, we see it every day. What used to take hours or days of manual exploration and intuition can now be accelerated by AI agents. These agents can navigate complex binaries and extract meaning in seconds.

The game has changed.

The evidence from the front lines

The two CTFs we participated in last month, RE//verse and Ph0wn, gave us a clear look at this new reality. The challenges were quickly cracked in a battle of autonomous agents.

The real test wasn't about manual reversing anymore. It came down to:

- Making reverse engineering tools accessible to the agent through MCP.

- Combining those tools effectively.

- Managing a large stock of Claude Code tokens.
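
As a rough illustration of the first point, the sketch below exposes one small analysis helper as a tool an agent can call. It is loosely modeled on the JSON-RPC `tools/call` request shape used by MCP, but it is a simplified stand-in built on the Python standard library only. It is not the official MCP SDK and does not describe any specific CTF setup. The `extract_strings` tool, the `TOOLS` registry, and `handle_request` are hypothetical names chosen for the example.

```python
import json
import re


def extract_strings(data: bytes, min_len: int = 4) -> list[str]:
    """Return printable ASCII runs of at least min_len characters,
    similar in spirit to the Unix `strings` utility."""
    pattern = rb"[\x20-\x7e]{%d,}" % min_len
    return [m.decode("ascii") for m in re.findall(pattern, data)]


# Hypothetical registry: tool name -> description and handler.
# Binary input is passed hex-encoded because JSON cannot carry raw bytes.
TOOLS = {
    "extract_strings": {
        "description": "List printable ASCII strings in a hex-encoded blob.",
        "handler": lambda args: extract_strings(
            bytes.fromhex(args["hex"]), args.get("min_len", 4)
        ),
    },
}


def handle_request(raw: str) -> str:
    """Dispatch one JSON-RPC style request to a registered tool."""
    req = json.loads(raw)
    base = {"jsonrpc": "2.0", "id": req.get("id")}

    if req.get("method") != "tools/call":
        error = {"code": -32601, "message": "Method not found"}
        return json.dumps({**base, "error": error})

    params = req.get("params", {})
    tool = TOOLS.get(params.get("name"))
    if tool is None:
        error = {"code": -32602, "message": "Unknown tool"}
        return json.dumps({**base, "error": error})

    result = tool["handler"](params.get("arguments", {}))
    # The result is returned as a single text item holding JSON.
    content = [{"type": "text", "text": json.dumps(result)}]
    return json.dumps({**base, "result": {"content": content}})
```

An agent-side caller would send a request naming the tool and its arguments, then parse the text item in the response. Combining tools, the second point in the list, then comes down to registering more handlers behind the same dispatcher.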

While CTFs traditionally challenged a researcher's ability to define a strategy for finding a needle in a haystack, we are now dealing with an entirely new paradigm.

It’s easy to think AI just levels the playing field for beginners. It does lower the barrier to entry. But focusing on the novices misses the real story.

In reality, it is the experienced analysts who truly hold the advantage.

When you give an autonomous agent to a seasoned reverse engineer, the results multiply. They already have the intuition. They know what to ask, where to look, and how to validate what the AI tells them.

Because the AI handles the grueling work of parsing and translating logic, these experts move much faster. They can explore multiple complex paths at once. They scale their investigations to a level we haven't seen before.

AI doesn't replace their expertise. It amplifies it.

This creates a severe asymmetry in our field. Threat actors are already using this amplification to map vulnerabilities at unprecedented speeds. Teams that adopt these tools effectively gain a massive advantage. Those who hesitate will inevitably fall behind.

There is an urgent need to meet attackers at their current level.

The reality of integrating AI into security workflows

It is one thing to acknowledge that agentic AI is the future of the industry. It is another entirely to implement it safely.

Doing it “right” is harder than it looks

We know professional security teams can’t just plug a public LLM into their daily workflows and call it a day.

We deal with strict constraints. Data cannot leave the organization, whether that means sensitive binaries, proprietary algorithms, in-progress vulnerability research, or internal methodologies. All of this must stay strictly confidential.

In our industry, “doing it right” means working in a highly controlled environment. It requires preventing data leaks and ensuring every reverse engineering investigation remains private, traceable, and reproducible.

This creates immediate tension. The most powerful AI models live in the cloud, and using them directly means giving up control over your data, your prompts, and your results. For mature organizations handling critical software security investigations, that compromise is simply unacceptable.
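
To make "private, traceable, and reproducible" a little more concrete, here is a minimal sketch of an investigation log kept inside the organization. It assumes each AI interaction is stored with the hash of the binary, the model name, and the exact prompt, and that records are hash-chained so later edits can be detected. The `AnalysisRecord` fields and the `append_record` and `verify_log` helpers are hypothetical and only illustrate the idea. A real deployment would also need timestamps, access control, and durable storage.

```python
import hashlib
import json
from dataclasses import asdict, dataclass

GENESIS = "0" * 64  # placeholder hash for the first record in a log


@dataclass(frozen=True)
class AnalysisRecord:
    project: str
    analyst: str
    model: str          # which model produced the response
    binary_sha256: str  # identifies the exact sample that was analyzed
    prompt: str         # stored verbatim so the step can be replayed
    response: str
    prev_hash: str      # digest of the previous record (the chain link)

    def digest(self) -> str:
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


def append_record(log: list, **fields) -> AnalysisRecord:
    """Append a record linked to the digest of the previous one."""
    prev = log[-1].digest() if log else GENESIS
    record = AnalysisRecord(prev_hash=prev, **fields)
    log.append(record)
    return record


def verify_log(log: list) -> bool:
    """Return True only if every record links to its predecessor."""
    prev = GENESIS
    for record in log:
        if record.prev_hash != prev:
            return False
        prev = record.digest()
    return True
```

Because the prompt, model, and sample hash are stored together, a colleague can rerun the same step later, and `verify_log` returns `False` if any earlier record was altered after the fact.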

When AI tools create more chaos than value

Without the right structure, forcing AI agents into your lab creates more problems than it solves.

Picture analysts copy-pasting decompiled code into different web-based chatbots. Context gets lost. Prompts aren't saved. If an analyst leaves the company or shifts to a new project, their entire methodology walks out the door with them.

Instead of empowering researchers, ad-hoc AI integration leads to:

- Fragmented analyses scattered across different, disconnected tools.

- Uncontrolled, invisible data exposure to third-party servers.

- Inconsistent methodologies across your teams, making peer review impossible.

Instead of creating "super analysts," you risk building a system that is harder to manage, secure, and scale. In our experience, this is exactly how organizations lose control of their security posture.

Building a foundation for the future

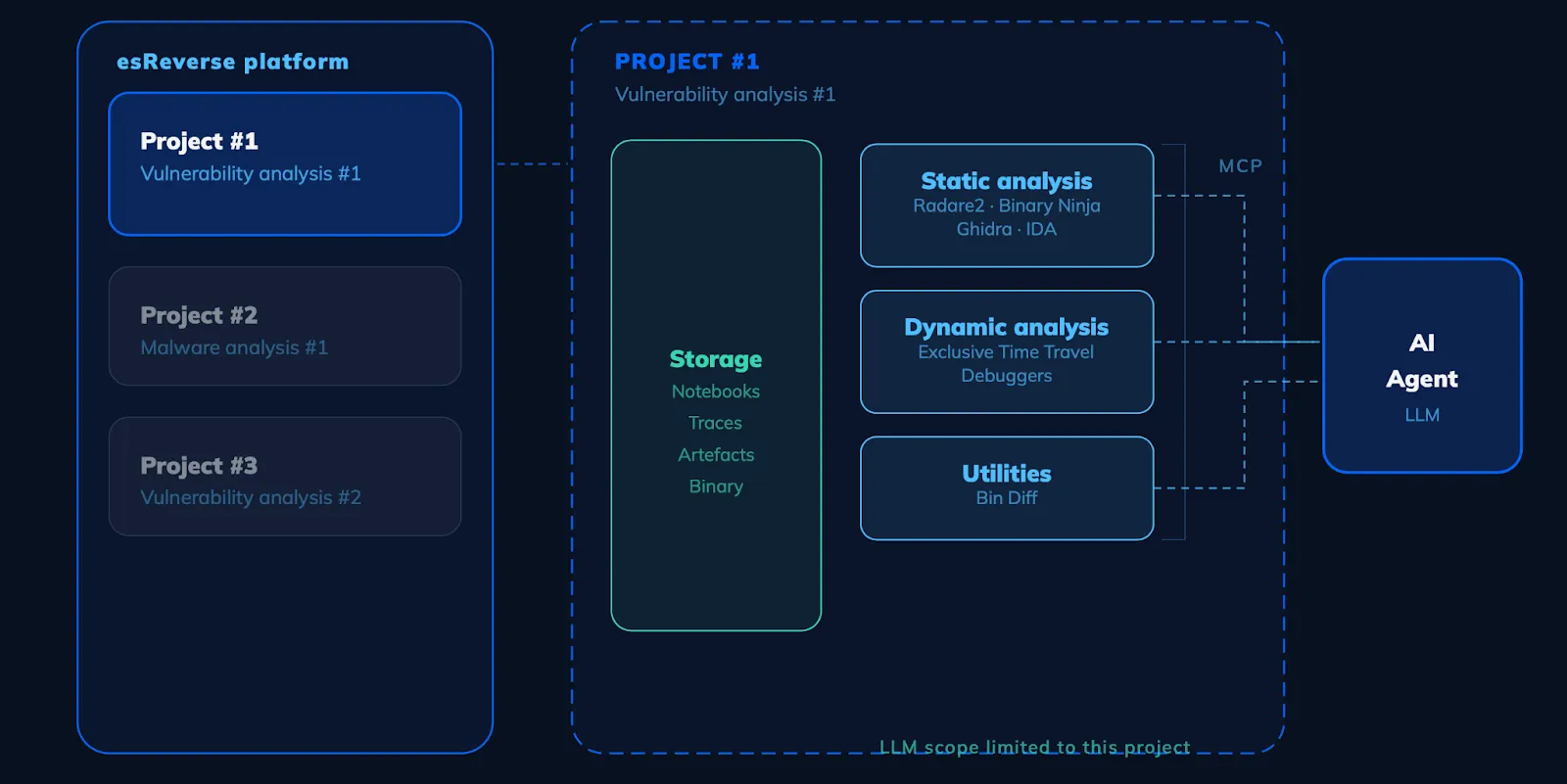

To actually use AI-assisted reverse engineering effectively, security teams need more than just API access to a model. They need a dedicated, secure infrastructure.

They need an environment where experts can collaborate on the same investigations, and where data, tools, and results are centralized. Knowledge must be captured and reused automatically.

Crucially, the AI needs to be integrated securely, orchestrated so it accesses only what it is allowed to see for a specific project. This also means having the flexibility to deploy local LLMs when cloud models are too risky.

This isn't just about adding another AI tool to your stack. It's about fundamentally restructuring your reverse engineering workflows so they can scale securely alongside these new technologies.
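
The two requirements above, project-scoped access and the option to fall back to a local LLM, can be sketched as a small policy check. This is an illustration under stated assumptions, not a description of how esReverse or any other product implements it. The `Sensitivity` levels, the `ProjectPolicy` fields, and the `"cloud"` and `"local"` backend labels are all hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum


class Sensitivity(Enum):
    PUBLIC = 1
    INTERNAL = 2
    RESTRICTED = 3


@dataclass
class ProjectPolicy:
    name: str
    # Artifacts the agent may read for this project, and nothing else.
    allowed_artifacts: set[str] = field(default_factory=set)
    # Highest sensitivity level that may be sent to a cloud model.
    max_cloud_sensitivity: Sensitivity = Sensitivity.PUBLIC


def fetch_for_agent(policy: ProjectPolicy, artifact_id: str,
                    store: dict[str, bytes]) -> bytes:
    """Return an artifact only if the project policy allows it."""
    if artifact_id not in policy.allowed_artifacts:
        raise PermissionError(
            f"{artifact_id!r} is outside project {policy.name!r}"
        )
    return store[artifact_id]


def choose_backend(policy: ProjectPolicy, sensitivity: Sensitivity) -> str:
    """Route to a cloud model only when the data is cleared for it."""
    if sensitivity.value <= policy.max_cloud_sensitivity.value:
        return "cloud"
    return "local"
```

The design choice here is deny by default: an artifact that is not explicitly listed for the project is never handed to the agent, and any data above the cleared level is routed to the local model.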

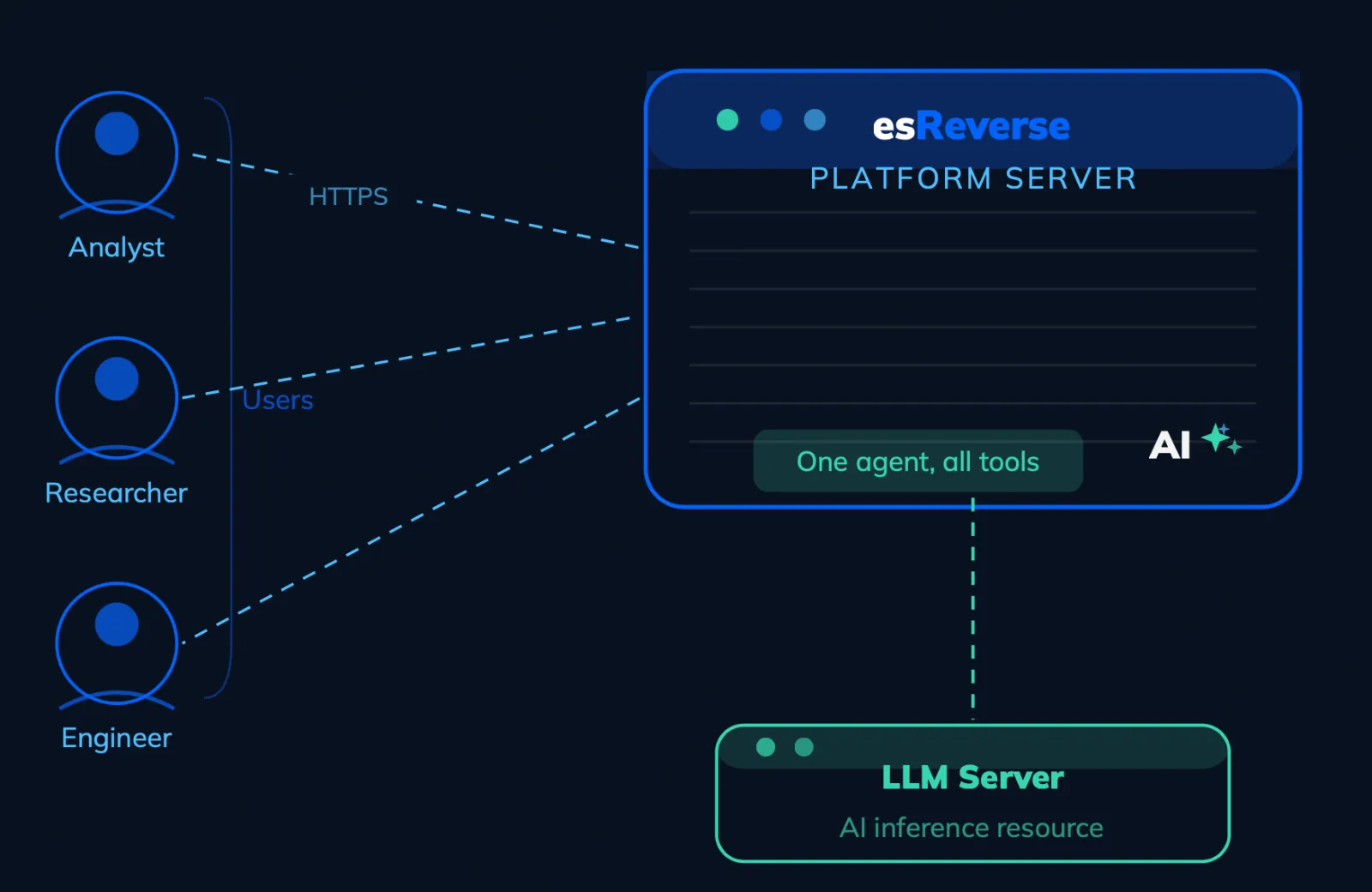

How esReverse secures the AI workflow

At eShard, we anticipated these challenges long before AI agents hit the mainstream. We knew that reverse engineering at scale intrinsically requires structure, collaboration, and reproducibility.

We built esReverse to serve as a central platform for software security investigations. It prevents the chaos of fragmented tools by giving teams a single place to run investigations as structured projects, share datasets, and build internal knowledge over time.

More importantly, it allows organizations to integrate their existing toolchains and new AI capabilities while keeping absolute control over their environment. Because the platform remains open by design, users can safely plug in whatever resources they need, whether open-source, commercial, or internal, without sacrificing data privacy.

The choice for security teams

Agentic AI is not just a passing trend; it is a permanent shift in how reverse engineering is executed. The adversaries have already adapted, and they are moving faster than ever.

It is completely valid to be wary of the privacy and operational risks. Exposing your most sensitive workflows to a black-box LLM is a recipe for disaster. But standing still out of fear is no longer a viable strategy.

The organizations that will thrive in this new era aren't the ones rushing to blindly adopt every new AI tool. They are the ones building a secure, structured foundation first. By prioritizing infrastructure and data control, you can turn this overwhelming shift into your team's greatest advantage.

You don't have to choose between keeping your data safe and keeping up with the attackers. With the right platform, you can do both.